- negative log loss;

- binary crossentropy;

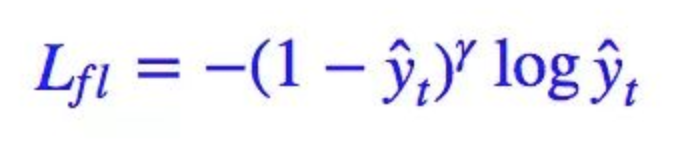

- focal loss;

Most loss implementations I found online are fairly convoluted, so I wrote my own versions with simpler logic.

focal loss

In practice, focal loss splits into two cases: binary classification (sigmoid activation) and multi-class classification (softmax activation).
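
For reference, the two cases can be written out as below. The formulas are transcribed from the docstrings of the two classes in this post, with $p$ the predicted probability, $y$ the 0/1 label, $\gamma$ the focusing exponent and $\alpha$ the optional weight on positive entries. The explicit sum over classes $c$ in the second formula is my reading of the elementwise product in the code, so treat it as an assumption.

```latex
\mathrm{FL}_{\text{binary}}(p, y)
  = -\left[\alpha\, y\,(1-p)^{\gamma}\log p
           + (1-\alpha)\,(1-y)\,p^{\gamma}\log(1-p)\right]

\mathrm{FL}_{\text{multi}}(p, y)
  = -\sum_{c} y_c\,(1-p_c)^{\gamma}\log p_c
```

With $\gamma = 0$ and no $\alpha$ weighting, the first expression reduces to binary cross-entropy and the second to the negative log loss.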

Binary focal loss

    import torch
    import torch.nn as nn


    class FocalLoss(nn.Module):
        """Binary focal loss for sigmoid outputs:
        -[alpha*y*(1-p)^gamma*log(p) + (1-alpha)*(1-y)*p^gamma*log(1-p)]
        """

        def __init__(self, gamma, alpha=None, onehot=False):
            super(FocalLoss, self).__init__()
            self.alpha = alpha
            self.gamma = gamma
            self.onehot = onehot

        def forward(self, inputs, targets):
            """
            :param inputs: predicted probabilities after sigmoid, shape (N, C)
            :param targets: class indices of shape (N,) by default,
                            or an (N, C) one-hot matrix if onehot=True
            :return: scalar loss averaged over all N * C elements
            """
            N = inputs.size(0)
            C = inputs.size(1)
            # Clamp the probabilities to [0.001, 0.999] so that neither log(p)
            # nor log(1 - p) is ever evaluated at 0.
            inputs = torch.clamp(inputs, min=0.001, max=0.999)
            alpha = self.alpha
            if torch.is_tensor(alpha):
                # Keep alpha on the same device as the predictions.
                alpha = alpha.to(inputs.device)
            if not self.onehot:
                # Turn the class indices (int64) into a one-hot matrix.
                class_mask = torch.zeros_like(inputs)
                class_mask.scatter_(1, targets.view(-1, 1), 1.)
                targets = class_mask
            # Loss on the positive entries
            pos_sample_loss_matrix = -targets * torch.pow(1 - inputs, self.gamma) * inputs.log()
            # Loss on the negative entries
            neg_sample_loss_matrix = -(targets == 0).float() * torch.pow(inputs, self.gamma) * (1 - inputs).log()
            if alpha is not None:
                return (alpha * pos_sample_loss_matrix + (1 - alpha) * neg_sample_loss_matrix).sum() / (N * C)
            else:
                return (pos_sample_loss_matrix + neg_sample_loss_matrix).sum() / (N * C)
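
To get a feel for what the `(1 - p)^gamma` factor in the class above does, here is a small worked example in plain Python. It is only a sketch: `focal_term` is a hypothetical helper written for this illustration (it is not part of the class), and the probabilities 0.9 and 0.1 are made-up values for an "easy" and a "hard" positive sample.

```python
import math


def focal_term(p, y, gamma, alpha=None):
    """Per-element binary focal loss for probability p and label y in {0, 1}."""
    if y == 1:
        weight = 1.0 if alpha is None else alpha
        return -weight * (1.0 - p) ** gamma * math.log(p)
    weight = 1.0 if alpha is None else 1.0 - alpha
    return -weight * p ** gamma * math.log(1.0 - p)


# gamma = 0 gives the plain cross-entropy term.
easy_ce = focal_term(0.9, 1, gamma=0.0)
hard_ce = focal_term(0.1, 1, gamma=0.0)

# gamma = 2 multiplies each term by (1 - p)^2.
easy_fl = focal_term(0.9, 1, gamma=2.0)
hard_fl = focal_term(0.1, 1, gamma=2.0)

# The easy sample keeps about 1% of its cross-entropy loss,
# the hard sample about 81%.
print(easy_fl / easy_ce)
print(hard_fl / hard_ce)
```

The well-classified sample is scaled down far more than the misclassified one, which is the point of focal loss on imbalanced data.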

Multi-class focal loss

    import torch
    import torch.nn as nn


    class FocalLoss(nn.Module):
        """Multi-class focal loss for softmax outputs:
        -y*(1-p)^gamma*log(p)
        """

        def __init__(self, gamma, onehot=False):
            super(FocalLoss, self).__init__()
            self.gamma = gamma
            self.onehot = onehot

        def forward(self, inputs, targets):
            """
            :param inputs: predicted probabilities after softmax, shape (N, C)
            :param targets: class indices of shape (N,) by default,
                            or an (N, C) one-hot matrix if onehot=True
            :return: scalar loss averaged over the labelled positions
            """
            # Clamp the probabilities to [0.001, 1.0] so that log(p) is never
            # evaluated at 0.
            inputs = torch.clamp(inputs, min=0.001, max=1.0)
            if not self.onehot:
                # Turn the class indices (int64) into a one-hot matrix.
                class_mask = torch.zeros_like(inputs)
                class_mask.scatter_(1, targets.view(-1, 1), 1.)
                targets = class_mask
            # Loss at the labelled positions; every other entry is zero.
            pos_sample_loss_matrix = -targets * torch.pow(1 - inputs, self.gamma) * inputs.log()
            # Return the mean by default. A plain .mean() would be wrong here,
            # because the matrix still has the full input size; the sum has to
            # be divided by the number of labelled positions instead.
            return pos_sample_loss_matrix.sum() / targets.sum()
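
One way to sanity-check the multi-class version is to compare it with PyTorch's built-in cross-entropy at `gamma = 0`, where the two should agree. The sketch below is a rough check, not the class itself: `multiclass_focal_loss` is a hypothetical functional helper that mirrors the same arithmetic, and the logits and labels are made-up values.

```python
import torch
import torch.nn.functional as F


def multiclass_focal_loss(probs, target_ids, gamma):
    """Focal loss on softmax probabilities, averaged over the labelled samples."""
    probs = probs.clamp(min=1e-3, max=1.0)
    one_hot = F.one_hot(target_ids, num_classes=probs.size(1)).to(probs.dtype)
    loss_matrix = -one_hot * (1 - probs) ** gamma * probs.log()
    return loss_matrix.sum() / one_hot.sum()


logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0],
                       [-0.5, 1.5, 0.0],
                       [1.0, 1.0, 1.0]])
target_ids = torch.tensor([0, 2, 1, 2])
probs = torch.softmax(logits, dim=1)

loss_gamma0 = multiclass_focal_loss(probs, target_ids, gamma=0.0)
loss_gamma2 = multiclass_focal_loss(probs, target_ids, gamma=2.0)
reference = F.cross_entropy(logits, target_ids)  # expects raw logits

print(loss_gamma0.item(), reference.item())  # these two should match
print(loss_gamma2.item())                    # smaller, since (1 - p)^2 <= 1
```

Note that `F.cross_entropy` takes logits, while the loss classes in this post take probabilities that have already gone through softmax.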

Code

- NegtiveLogLoss

- BinaryCrossEntropy

    import torch
    import torch.nn as nn


    class NegtiveLogLoss(nn.Module):
        """-log(p) loss"""

        def __init__(self, onehot=False):
            super(NegtiveLogLoss, self).__init__()
            self.onehot = onehot

        def forward(self, inputs, targets):
            """
            :param inputs: predicted probabilities (e.g. after softmax), shape (N, C)
            :param targets: class indices of shape (N,) by default,
                            or an (N, C) one-hot matrix if onehot=True
            :return: scalar loss averaged over the labelled positions
            """
            # Clamp the probabilities to [0.001, 1.0] so that log(p) is never
            # evaluated at 0.
            inputs = torch.clamp(inputs, min=0.001, max=1.0)
            if not self.onehot:
                # Turn the class indices (int64) into a one-hot matrix.
                class_mask = torch.zeros_like(inputs)
                class_mask.scatter_(1, targets.view(-1, 1), 1.)
                targets = class_mask
            # Take the log of every predicted element, then multiply by the
            # one-hot label so that only the labelled positions survive.
            # This is still a matrix; no averaging has happened yet.
            loss_matrix = -targets * inputs.log()
            # Return the mean by default. A plain .mean() would be wrong here,
            # because the matrix still has the full input size; the sum has to
            # be divided by the number of labelled positions instead.
            return loss_matrix.sum() / targets.sum()


    class BinaryCrossEntropy(nn.Module):
        """-[y*log(p) + (1-y)*log(1-p)] loss"""

        def __init__(self, alpha=None, onehot=False):
            super(BinaryCrossEntropy, self).__init__()
            self.alpha = alpha
            self.onehot = onehot

        def forward(self, inputs, targets):
            """
            :param inputs: predicted probabilities after sigmoid, shape (N, C)
            :param targets: class indices of shape (N,) by default,
                            or an (N, C) one-hot matrix if onehot=True
            :return: scalar loss averaged over all N * C elements
            """
            N = inputs.size(0)
            C = inputs.size(1)
            # Clamp the probabilities to [0.001, 0.999] so that neither log(p)
            # nor log(1 - p) is ever evaluated at 0.
            inputs = torch.clamp(inputs, min=0.001, max=0.999)
            if not self.onehot:
                class_mask = torch.zeros_like(inputs)
                class_mask.scatter_(1, targets.view(-1, 1), 1.)
                targets = class_mask
            # Loss on the positive entries
            pos_sample_loss_matrix = -targets * inputs.log()
            # Loss on the negative entries
            neg_sample_loss_matrix = -(targets == 0).float() * (1 - inputs).log()
            if self.alpha is not None:
                return (self.alpha * pos_sample_loss_matrix + (1 - self.alpha) * neg_sample_loss_matrix).sum() / (N * C)
            else:
                return (pos_sample_loss_matrix + neg_sample_loss_matrix).sum() / (N * C)
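
Two details in the code above are easy to miss, so here is a small illustration with made-up numbers. It is a sketch rather than part of the classes. First, it compares dividing by `targets.sum()` with a plain `.mean()` on the masked loss matrix. Second, it shows why the upper clamp bound has to stay strictly below 1 whenever a `(1 - p).log()` term is involved.

```python
import torch

probs = torch.tensor([[0.7, 0.2, 0.1],
                      [0.1, 0.8, 0.1]])
one_hot = torch.tensor([[1.0, 0.0, 0.0],
                        [0.0, 1.0, 0.0]])

# Non-zero only at the two labelled positions.
loss_matrix = -one_hot * probs.log()
per_sample = loss_matrix.sum() / one_hot.sum()  # divides by N = 2
naive_mean = loss_matrix.mean()                 # divides by N * C = 6

print(per_sample.item(), naive_mean.item())     # naive_mean is C = 3 times smaller

# A prediction of exactly 1.0 on an entry that the mask zeroes out:
p = torch.tensor([1.0])
mask = torch.tensor([0.0])
unclamped = mask * (1 - p).log()                    # 0 * -inf gives nan
clamped = mask * (1 - p.clamp(max=1 - 1e-3)).log()  # 0 * finite gives 0

print(unclamped, clamped)
```

A single `nan` in the loss matrix propagates through `.sum()`, so one saturated prediction is enough to break a training step.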

References

- http://kodgv.xyz/2019/04/22/%E7%A5%9E%E7%BB%8F%E7%BD%91%E7%BB%9C/FocalLoss%E9%92%88%E5%AF%B9%E4%B8%8D%E5%B9%B3%E8%A1%A1%E6%95%B0%E6%8D%AE/